mirror of https://github.com/sismics/docs.git

synced 2025-04-21 02:46:34 +02:00

Compare commits

No commits in common. "master" and "v1.9" have entirely different histories.

84 .github/workflows/build-deploy.yml (vendored)


@ -1,84 +0,0 @@
name: Maven CI/CD

on:
  push:
    branches: [master]
    tags: [v*]
  workflow_dispatch:

jobs:
  build_and_publish:
    runs-on: ubuntu-latest

    steps:
      - uses: actions/checkout@v2
      - name: Set up JDK 11
        uses: actions/setup-java@v2
        with:
          java-version: "11"
          distribution: "temurin"
          cache: maven
      - name: Install test dependencies
        run: sudo apt-get update && sudo apt-get -y -q --no-install-recommends install ffmpeg mediainfo tesseract-ocr tesseract-ocr-deu
      - name: Build with Maven
        run: mvn --batch-mode -Pprod clean install
      - name: Upload war artifact
        uses: actions/upload-artifact@v2
        with:
          name: docs-web-ci.war
          path: docs-web/target/docs*.war

  build_docker_image:
    name: Publish to Docker Hub
    runs-on: ubuntu-latest
    needs: [build_and_publish]

    steps:
      -
        name: Checkout
        uses: actions/checkout@v2
      -
        name: Download war artifact
        uses: actions/download-artifact@v2
        with:
          name: docs-web-ci.war
          path: docs-web/target
      -
        name: Setup up Docker Buildx
        uses: docker/setup-buildx-action@v1
      -
        name: Login to DockerHub
        if: github.event_name != 'pull_request'
        uses: docker/login-action@v1
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}
      -
        name: Populate Docker metadata
        id: metadata
        uses: docker/metadata-action@v3
        with:
          images: sismics/docs
          flavor: |
            latest=false
          tags: |
            type=ref,event=tag
            type=raw,value=latest,enable=${{ github.ref_type != 'tag' }}
          labels: |
            org.opencontainers.image.title = Teedy
            org.opencontainers.image.description = Teedy is an open source, lightweight document management system for individuals and businesses.
            org.opencontainers.image.created = ${{ github.event_created_at }}
            org.opencontainers.image.author = Sismics
            org.opencontainers.image.url = https://teedy.io/
            org.opencontainers.image.vendor = Sismics
            org.opencontainers.image.license = GPLv2
            org.opencontainers.image.version = ${{ github.event_head_commit.id }}
      -
        name: Build and push
        id: docker_build
        uses: docker/build-push-action@v2
        with:
          context: .
          push: ${{ github.event_name != 'pull_request' }}
          tags: ${{ steps.metadata.outputs.tags }}
          labels: ${{ steps.metadata.outputs.labels }}
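
The metadata step sets `latest=false` and then declares its own two tag rules, so the pushed tag depends only on whether the ref is a git tag. The Java sketch below is a simplified model of that configuration. It is not docker/metadata-action's implementation, `DockerTagModel` and `imageTags` are hypothetical names, and it ignores any edge cases the action itself may handle.

```java
import java.util.List;

/**
 * Simplified model (an assumption, not the action's real code) of the tag rules in
 * the "Populate Docker metadata" step:
 *   type=ref,event=tag                               -> the git tag name
 *   type=raw,value=latest,enable=ref_type != 'tag'   -> "latest"
 * flavor latest=false means no additional automatic "latest" tag is added.
 */
public class DockerTagModel {

    /** Returns the image references that would be pushed for the given ref. */
    static List<String> imageTags(String image, String refType, String refName) {
        if ("tag".equals(refType)) {
            // Tag pushes (for example v1.11) publish only the tag name.
            return List.of(image + ":" + refName);
        }
        // Branch builds (master, or a manual workflow_dispatch run) publish "latest".
        return List.of(image + ":latest");
    }

    public static void main(String[] args) {
        System.out.println(imageTags("sismics/docs", "tag", "v1.11"));
        System.out.println(imageTags("sismics/docs", "branch", "master"));
    }
}
```

Under this model, pushing tag `v1.11` yields `sismics/docs:v1.11`, and a push to `master` yields `sismics/docs:latest`.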

5 .gitignore (vendored)

@ -14,8 +14,3 @@ import_test
teedy-importer-linux
teedy-importer-macos
teedy-importer-win.exe
docs/*
!docs/.gitkeep

#macos
.DS_Store

33 .travis.yml (Normal file)

@ -0,0 +1,33 @@
sudo: required
dist: trusty
language: java
before_install:
  - sudo add-apt-repository -y ppa:mc3man/trusty-media
  - sudo apt-get -qq update
  - sudo apt-get -y -q install ffmpeg mediainfo tesseract-ocr tesseract-ocr-fra tesseract-ocr-ita tesseract-ocr-kor tesseract-ocr-rus tesseract-ocr-ukr tesseract-ocr-spa tesseract-ocr-ara tesseract-ocr-hin tesseract-ocr-deu tesseract-ocr-pol tesseract-ocr-jpn tesseract-ocr-por tesseract-ocr-tha tesseract-ocr-jpn tesseract-ocr-chi-sim tesseract-ocr-chi-tra tesseract-ocr-nld tesseract-ocr-tur tesseract-ocr-heb tesseract-ocr-hun tesseract-ocr-fin tesseract-ocr-swe tesseract-ocr-lav tesseract-ocr-dan tesseract-ocr-nor
  - sudo apt-get -y -q install haveged && sudo service haveged start
after_success:
  - |
    if [ "$TRAVIS_PULL_REQUEST" == "false" ]; then
      mvn -Pprod -DskipTests clean install
      docker login -u $DOCKER_USER -p $DOCKER_PASS
      export REPO=sismics/docs
      export TAG=`if [ "$TRAVIS_BRANCH" == "master" ]; then echo "latest"; else echo $TRAVIS_BRANCH ; fi`
      docker build -f Dockerfile -t $REPO:$COMMIT .
      docker tag $REPO:$COMMIT $REPO:$TAG
      docker tag $REPO:$COMMIT $REPO:travis-$TRAVIS_BUILD_NUMBER
      docker push $REPO
      cd docs-importer
      export REPO=sismics/docs-importer
      export TAG=`if [ "$TRAVIS_BRANCH" == "master" ]; then echo "latest"; else echo $TRAVIS_BRANCH ; fi`
      docker build -f Dockerfile -t $REPO:$COMMIT .
      docker tag $REPO:$COMMIT $REPO:$TAG
      docker tag $REPO:$COMMIT $REPO:travis-$TRAVIS_BUILD_NUMBER
      docker push $REPO
    fi
env:
  global:
    - secure: LRGpjWORb0qy6VuypZjTAfA8uRHlFUMTwb77cenS9PPRBxuSnctC531asS9Xg3DqC5nsRxBBprgfCKotn5S8nBSD1ceHh84NASyzLSBft3xSMbg7f/2i7MQ+pGVwLncusBU6E/drnMFwZBleo+9M8Tf96axY5zuUp90MUTpSgt0=
    - secure: bCDDR6+I7PmSkuTYZv1HF/z98ANX/SFEESUCqxVmV5Gs0zFC0vQXaPJQ2xaJNRop1HZBFMZLeMMPleb0iOs985smpvK2F6Rbop9Tu+Vyo0uKqv9tbZ7F8Nfgnv9suHKZlL84FNeUQZJX6vsFIYPEJ/r7K5P/M0PdUy++fEwxEhU=
    - secure: ewXnzbkgCIHpDWtaWGMa1OYZJ/ki99zcIl4jcDPIC0eB3njX/WgfcC6i0Ke9mLqDqwXarWJ6helm22sNh+xtQiz6isfBtBX+novfRt9AANrBe3koCMUemMDy7oh5VflBaFNP0DVb8LSCnwf6dx6ZB5E9EB8knvk40quc/cXpGjY=
    - COMMIT=${TRAVIS_COMMIT::8}
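
The `after_success` script above tags each image three ways: with the short commit, with a moving branch tag (`latest` on `master`), and with a per-build `travis-<number>` tag. The Java sketch below shows that naming for illustration only. `TravisTagModel` is a hypothetical class, and it assumes Travis's behaviour of putting the tag name in `TRAVIS_BRANCH` on tag builds.

```java
import java.util.List;

/** Illustrative model of the image references built by the .travis.yml after_success script. */
public class TravisTagModel {

    /** COMMIT=${TRAVIS_COMMIT::8} is a bash substring: the first 8 characters. */
    static String shortCommit(String travisCommit) {
        return travisCommit.substring(0, Math.min(8, travisCommit.length()));
    }

    /** TAG is "latest" on master, otherwise the value of TRAVIS_BRANCH. */
    static String tag(String travisBranch) {
        return "master".equals(travisBranch) ? "latest" : travisBranch;
    }

    /** The three references created by docker build / docker tag for one repository. */
    static List<String> imageRefs(String repo, String travisCommit, String travisBranch, int buildNumber) {
        return List.of(
                repo + ":" + shortCommit(travisCommit),  // docker build -t $REPO:$COMMIT
                repo + ":" + tag(travisBranch),          // docker tag $REPO:$COMMIT $REPO:$TAG
                repo + ":travis-" + buildNumber);        // docker tag $REPO:$COMMIT $REPO:travis-$TRAVIS_BUILD_NUMBER
    }

    public static void main(String[] args) {
        imageRefs("sismics/docs", "0123456789abcdef", "master", 42).forEach(System.out::println);
    }
}
```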

80 Dockerfile

@ -1,75 +1,13 @@
FROM ubuntu:22.04
LABEL maintainer="b.gamard@sismics.com"
FROM sismics/ubuntu-jetty:9.4.12-2
MAINTAINER b.gamard@sismics.com

# Run Debian in non interactive mode
ENV DEBIAN_FRONTEND noninteractive
RUN apt-get update && apt-get -y -q install ffmpeg mediainfo tesseract-ocr tesseract-ocr-fra tesseract-ocr-ita tesseract-ocr-kor tesseract-ocr-rus tesseract-ocr-ukr tesseract-ocr-spa tesseract-ocr-ara tesseract-ocr-hin tesseract-ocr-deu tesseract-ocr-pol tesseract-ocr-jpn tesseract-ocr-por tesseract-ocr-tha tesseract-ocr-jpn tesseract-ocr-chi-sim tesseract-ocr-chi-tra tesseract-ocr-nld tesseract-ocr-tur tesseract-ocr-heb tesseract-ocr-hun tesseract-ocr-fin tesseract-ocr-swe tesseract-ocr-lav tesseract-ocr-dan tesseract-ocr-nor && \
    apt-get clean && rm -rf /var/lib/apt/lists/*

# Configure env
ENV LANG C.UTF-8
ENV LC_ALL C.UTF-8
ENV JAVA_HOME /usr/lib/jvm/java-11-openjdk-amd64/
ENV JAVA_OPTIONS -Dfile.encoding=UTF-8 -Xmx1g
ENV JETTY_VERSION 11.0.20
ENV JETTY_HOME /opt/jetty
# Remove the embedded javax.mail jar from Jetty
RUN rm -f /opt/jetty/lib/mail/javax.mail.glassfish-*.jar

# Install packages
RUN apt-get update && \
    apt-get -y -q --no-install-recommends install \
    vim less procps unzip wget tzdata openjdk-11-jdk \
    ffmpeg \
    mediainfo \
    tesseract-ocr \
    tesseract-ocr-ara \
    tesseract-ocr-ces \
    tesseract-ocr-chi-sim \
    tesseract-ocr-chi-tra \
    tesseract-ocr-dan \
    tesseract-ocr-deu \
    tesseract-ocr-fin \
    tesseract-ocr-fra \
    tesseract-ocr-heb \
    tesseract-ocr-hin \
    tesseract-ocr-hun \
    tesseract-ocr-ita \
    tesseract-ocr-jpn \
    tesseract-ocr-kor \
    tesseract-ocr-lav \
    tesseract-ocr-nld \
    tesseract-ocr-nor \
    tesseract-ocr-pol \
    tesseract-ocr-por \
    tesseract-ocr-rus \
    tesseract-ocr-spa \
    tesseract-ocr-swe \
    tesseract-ocr-tha \
    tesseract-ocr-tur \
    tesseract-ocr-ukr \
    tesseract-ocr-vie \
    tesseract-ocr-sqi \
    && apt-get clean && \
    rm -rf /var/lib/apt/lists/*
RUN dpkg-reconfigure -f noninteractive tzdata
ADD docs.xml /opt/jetty/webapps/docs.xml
ADD docs-web/target/docs-web-*.war /opt/jetty/webapps/docs.war

# Install Jetty
RUN wget -nv -O /tmp/jetty.tar.gz \
    "https://repo1.maven.org/maven2/org/eclipse/jetty/jetty-home/${JETTY_VERSION}/jetty-home-${JETTY_VERSION}.tar.gz" \
    && tar xzf /tmp/jetty.tar.gz -C /opt \
    && mv /opt/jetty* /opt/jetty \
    && useradd jetty -U -s /bin/false \
    && chown -R jetty:jetty /opt/jetty \
    && mkdir /opt/jetty/webapps \
    && chmod +x /opt/jetty/bin/jetty.sh

EXPOSE 8080

# Install app
RUN mkdir /app && \
    cd /app && \
    java -jar /opt/jetty/start.jar --add-modules=server,http,webapp,deploy

ADD docs.xml /app/webapps/docs.xml
ADD docs-web/target/docs-web-*.war /app/webapps/docs.war

WORKDIR /app

CMD ["java", "-jar", "/opt/jetty/start.jar"]
ENV JAVA_OPTIONS -Xmx1g
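
On the master side, the Dockerfile downloads `jetty-home` from Maven Central instead of starting from the `sismics/ubuntu-jetty` base image. The URL follows the standard Maven repository layout: the group ID with dots turned into slashes, then the artifact ID, the version, and `artifactId-version.extension`. The short Java sketch below rebuilds that URL from a version string. `MavenCentralUrl` is a hypothetical helper and is not part of the build.

```java
/** Rebuilds a Maven Central artifact URL using the standard repository layout. */
public class MavenCentralUrl {

    static final String REPO = "https://repo1.maven.org/maven2";

    /** groupPath/artifactId/version/artifactId-version.extension */
    static String artifactUrl(String groupId, String artifactId, String version, String extension) {
        return String.format("%s/%s/%s/%s/%s-%s.%s",
                REPO, groupId.replace('.', '/'), artifactId, version, artifactId, version, extension);
    }

    public static void main(String[] args) {
        // Same value as ENV JETTY_VERSION in the master Dockerfile.
        System.out.println(artifactUrl("org.eclipse.jetty", "jetty-home", "11.0.20", "tar.gz"));
    }
}
```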

|

||||

112

README.md

112

README.md

@ -3,7 +3,7 @@

|

||||

</h3>

|

||||

|

||||

[](https://www.gnu.org/licenses/old-licenses/gpl-2.0.en.html)

|

||||

[](https://github.com/sismics/docs/actions/workflows/build-deploy.yml)

|

||||

[](http://travis-ci.org/sismics/docs)

|

||||

|

||||

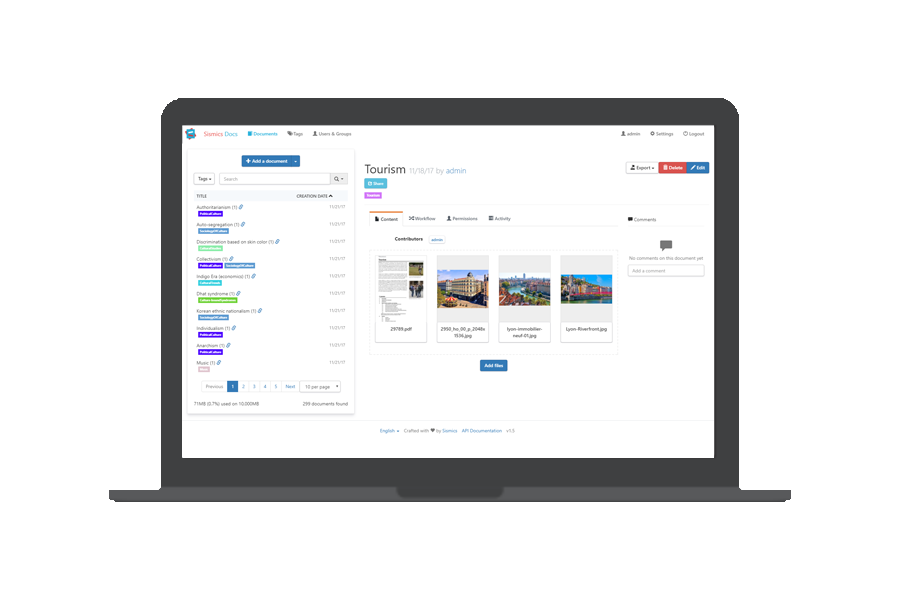

Teedy is an open source, lightweight document management system for individuals and businesses.

|

||||

|

||||

@ -15,7 +15,8 @@ Teedy is an open source, lightweight document management system for individuals

|

||||

|

||||

|

||||

|

||||

# Demo

|

||||

Demo

|

||||

----

|

||||

|

||||

A demo is available at [demo.teedy.io](https://demo.teedy.io)

|

||||

|

||||

@ -23,7 +24,8 @@ A demo is available at [demo.teedy.io](https://demo.teedy.io)

|

||||

- "admin" login with "admin" password

|

||||

- "demo" login with "password" password

|

||||

|

||||

# Features

|

||||

Features

|

||||

--------

|

||||

|

||||

- Responsive user interface

|

||||

- Optical character recognition

|

||||

@ -53,20 +55,21 @@ A demo is available at [demo.teedy.io](https://demo.teedy.io)

|

||||

- [Bulk files importer](https://github.com/sismics/docs/tree/master/docs-importer) (single or scan mode)

|

||||

- Tested to one million documents

|

||||

|

||||

# Install with Docker

|

||||

Install with Docker

|

||||

-------------------

|

||||

|

||||

A preconfigured Docker image is available, including OCR and media conversion tools, listening on port 8080. If no PostgreSQL config is provided, the database is an embedded H2 database. The H2 embedded database should only be used for testing. For production usage use the provided PostgreSQL configuration (check the Docker Compose example)

|

||||

A preconfigured Docker image is available, including OCR and media conversion tools, listening on port 8080. The database is an embedded H2 database but PostgreSQL is also supported for more performance.

|

||||

|

||||

**The default admin password is "admin". Don't forget to change it before going to production.**

|

||||

|

||||

- Master branch, can be unstable. Not recommended for production use: `sismics/docs:latest`

|

||||

- Latest stable version: `sismics/docs:v1.11`

|

||||

- Latest stable version: `sismics/docs:v1.8`

|

||||

|

||||

The data directory is `/data`. Don't forget to mount a volume on it.

|

||||

|

||||

To build external URL, the server is expecting a `DOCS_BASE_URL` environment variable (for example https://teedy.mycompany.com)
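
This diff does not show how the server combines `DOCS_BASE_URL` with its own paths. As a hedged sketch only, a join that tolerates a trailing slash on the base URL (and a leading slash on the path) could look like the code below. `externalUrl` and the `document/42` path are hypothetical.

```java
/** Hypothetical helper: joins DOCS_BASE_URL and a relative path (not Teedy's actual code). */
public class BaseUrlJoin {

    static String externalUrl(String baseUrl, String path) {
        // Strip trailing slashes from the base and leading slashes from the path,
        // then join them with exactly one slash.
        String base = baseUrl.replaceAll("/+$", "");
        String rel = path.replaceAll("^/+", "");
        return base + "/" + rel;
    }

    public static void main(String[] args) {
        String base = System.getenv().getOrDefault("DOCS_BASE_URL", "https://teedy.mycompany.com");
        // "document/42" is a made-up path used only for illustration.
        System.out.println(externalUrl(base, "/document/42"));
    }
}
```

The sketch uses plain string joining instead of `URI.resolve`. Resolving `document/42` against `https://example.com/teedy` (no trailing slash) would drop the `/teedy` segment.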

## Available environment variables
### Available environment variables

- General
  - `DOCS_BASE_URL`: The base url used by the application. Generated url's will be using this as base.

@ -81,7 +84,6 @@ To build external URL, the server is expecting a `DOCS_BASE_URL` environment var
  - `DATABASE_URL`: The jdbc connection string to be used by `hibernate`.
  - `DATABASE_USER`: The user which should be used for the database connection.
  - `DATABASE_PASSWORD`: The password to be used for the database connection.
  - `DATABASE_POOL_SIZE`: The pool size to be used for the database connection.

- Language
  - `DOCS_DEFAULT_LANGUAGE`: The language which will be used as default. Currently supported values are:

@ -93,19 +95,41 @@ To build external URL, the server is expecting a `DOCS_BASE_URL` environment var
  - `DOCS_SMTP_USERNAME`: The username to be used.
  - `DOCS_SMTP_PASSWORD`: The password to be used.
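
Master's README states that the embedded H2 database is used when no PostgreSQL configuration is provided. The sketch below shows that decision driven by the `DATABASE_*` variables listed above. The `DbConfig` class and the caller-supplied fallback pool size are assumptions, because the diff does not show Teedy's real defaults or parsing code.

```java
import java.util.Map;

/**
 * Hypothetical sketch of the README rule: without DATABASE_URL the embedded H2
 * database is used; otherwise the PostgreSQL settings come from DATABASE_* variables.
 */
public class DatabaseConfigSketch {

    /** Assumed shape of the resolved settings (not Teedy's real type). */
    static final class DbConfig {
        final boolean embeddedH2;
        final String url;
        final String user;
        final String password;
        final int poolSize;

        DbConfig(boolean embeddedH2, String url, String user, String password, int poolSize) {
            this.embeddedH2 = embeddedH2;
            this.url = url;
            this.user = user;
            this.password = password;
            this.poolSize = poolSize;
        }
    }

    /** fallbackPoolSize is chosen by the caller; the README documents no default. */
    static DbConfig fromEnv(Map<String, String> env, int fallbackPoolSize) {
        String url = env.get("DATABASE_URL");
        if (url == null || url.isBlank()) {
            // No JDBC URL: embedded H2, which the README says should only be used for testing.
            return new DbConfig(true, null, null, null, fallbackPoolSize);
        }
        String pool = env.get("DATABASE_POOL_SIZE");
        int poolSize = (pool == null || pool.isBlank()) ? fallbackPoolSize : Integer.parseInt(pool.trim());
        return new DbConfig(false, url, env.get("DATABASE_USER"), env.get("DATABASE_PASSWORD"), poolSize);
    }

    public static void main(String[] args) {
        DbConfig config = fromEnv(System.getenv(), 10);
        System.out.println(config.embeddedH2 ? "embedded H2" : "PostgreSQL at " + config.url);
    }
}
```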

## Examples
### Examples

In the following examples some passwords are exposed in cleartext. This was done in order to keep the examples simple. We strongly encourage you to use variables with an `.env` file or other means to securely store your passwords.

### Default, using PostgreSQL
#### Using the internal database

```yaml
version: '3'
services:
  # Teedy Application
  teedy-server:
    image: sismics/docs:v1.11
    image: sismics/docs:v1.8
    restart: unless-stopped
    ports:
      # Map internal port to host
      - 8080:8080
    environment:
      # Base url to be used
      DOCS_BASE_URL: "https://docs.example.com"
      # Set the admin email
      DOCS_ADMIN_EMAIL_INIT: "admin@example.com"
      # Set the admin password (in this example: "superSecure")
      DOCS_ADMIN_PASSWORD_INIT: "$$2a$$05$$PcMNUbJvsk7QHFSfEIDaIOjk1VI9/E7IPjTKx.jkjPxkx2EOKSoPS"
    volumes:
      - ./docs/data:/data
```

#### Using PostgreSQL

```yaml
version: '3'
services:
  # Teedy Application
  teedy-server:
    image: sismics/docs:v1.8
    restart: unless-stopped
    ports:
      # Map internal port to host
@ -123,7 +147,6 @@ services:
      DATABASE_URL: "jdbc:postgresql://teedy-db:5432/teedy"
      DATABASE_USER: "teedy_db_user"
      DATABASE_PASSWORD: "teedy_db_password"
      DATABASE_POOL_SIZE: "10"
    volumes:
      - ./docs/data:/data
    networks:
@ -157,47 +180,26 @@ networks:
    driver: bridge
```

### Using the internal database (only for testing)
Manual installation
-------------------

```yaml
version: '3'
services:
  # Teedy Application
  teedy-server:
    image: sismics/docs:v1.11
    restart: unless-stopped
    ports:
      # Map internal port to host
      - 8080:8080
    environment:
      # Base url to be used
      DOCS_BASE_URL: "https://docs.example.com"
      # Set the admin email
      DOCS_ADMIN_EMAIL_INIT: "admin@example.com"
      # Set the admin password (in this example: "superSecure")
      DOCS_ADMIN_PASSWORD_INIT: "$$2a$$05$$PcMNUbJvsk7QHFSfEIDaIOjk1VI9/E7IPjTKx.jkjPxkx2EOKSoPS"
    volumes:
      - ./docs/data:/data
```
#### Requirements

# Manual installation

## Requirements

- Java 11
- Tesseract 4 for OCR
- Java 8 with the [Java Cryptography Extension](http://www.oracle.com/technetwork/java/javase/downloads/jce-7-download-432124.html)
- Tesseract 3 or 4 for OCR
- ffmpeg for video thumbnails
- mediainfo for video metadata extraction
- A webapp server like [Jetty](http://eclipse.org/jetty/) or [Tomcat](http://tomcat.apache.org/)

## Download
#### Download

The latest release is downloadable here: <https://github.com/sismics/docs/releases> in WAR format.
**The default admin password is "admin". Don't forget to change it before going to production.**

## How to build Teedy from the sources
How to build Teedy from the sources
----------------------------------

Prerequisites: JDK 11, Maven 3, NPM, Grunt, Tesseract 4
Prerequisites: JDK 8 with JCE, Maven 3, NPM, Grunt, Tesseract 3 or 4

Teedy is organized in several Maven modules:

@ -208,39 +210,35 @@ Teedy is organized in several Maven modules:
First off, clone the repository: `git clone git://github.com/sismics/docs.git`
or download the sources from GitHub.

### Launch the build
#### Launch the build

From the root directory:

```console
mvn clean -DskipTests install
```
mvn clean -DskipTests install

### Run a stand-alone version
#### Run a stand-alone version

From the `docs-web` directory:

```console
mvn jetty:run
```
mvn jetty:run

### Build a .war to deploy to your servlet container
#### Build a .war to deploy to your servlet container

From the `docs-web` directory:

```console
mvn -Pprod -DskipTests clean install
```
mvn -Pprod -DskipTests clean install

You will get your deployable WAR in the `docs-web/target` directory.

# Contributing
Contributing
------------

All contributions are more than welcomed. Contributions may close an issue, fix a bug (reported or not reported), improve the existing code, add new feature, and so on.

The `master` branch is the default and base branch for the project. It is used for development and all Pull Requests should go there.

# License
License
-------

Teedy is released under the terms of the GPL license. See `COPYING` for more
information or see <http://opensource.org/licenses/GPL-2.0>.

@ -1,18 +0,0 @@
version: '3'
services:
  # Teedy Application
  teedy-server:
    image: sismics/docs:v1.10
    restart: unless-stopped
    ports:
      # Map internal port to host
      - 8080:8080
    environment:
      # Base url to be used
      DOCS_BASE_URL: "https://docs.example.com"
      # Set the admin email
      DOCS_ADMIN_EMAIL_INIT: "admin@example.com"
      # Set the admin password (in this example: "superSecure")
      DOCS_ADMIN_PASSWORD_INIT: "$$2a$$05$$PcMNUbJvsk7QHFSfEIDaIOjk1VI9/E7IPjTKx.jkjPxkx2EOKSoPS"
    volumes:
      - ./docs/data:/data
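
Each `DOCS_ADMIN_PASSWORD_INIT` value in these Compose examples is a bcrypt hash (`$2a$05$...`) written with every `$` doubled. Docker Compose performs `$` variable interpolation and treats `$$` as a literal `$`. The minimal Java sketch below shows that escaping, assuming you already have the hash. Producing the hash itself needs a bcrypt implementation (the `pom.xml` hunks in this compare list an `at.favre.lib:bcrypt` dependency), which is left out here. `ComposeEscape` is a hypothetical helper.

```java
/** Escapes a value so Docker Compose passes every '$' through literally. */
public class ComposeEscape {

    /** Doubles each '$' (String.replace is literal, not a regex). */
    static String escapeForCompose(String value) {
        return value.replace("$", "$$");
    }

    /** What the container receives after Compose interpolation. */
    static String unescapeFromCompose(String value) {
        return value.replace("$$", "$");
    }

    public static void main(String[] args) {
        // The bcrypt hash used in the examples above, before escaping.
        String hash = "$2a$05$PcMNUbJvsk7QHFSfEIDaIOjk1VI9/E7IPjTKx.jkjPxkx2EOKSoPS";
        System.out.println(escapeForCompose(hash));
    }
}
```

Printing the escaped hash reproduces the `$$2a$$05$$...` value shown in the examples.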

@ -5,8 +5,8 @@
    <parent>
        <groupId>com.sismics.docs</groupId>
        <artifactId>docs-parent</artifactId>
        <version>1.12-SNAPSHOT</version>
        <relativePath>../pom.xml</relativePath>
        <version>1.9</version>
        <relativePath>..</relativePath>
    </parent>

    <modelVersion>4.0.0</modelVersion>

@ -17,10 +17,20 @@
    <dependencies>
        <!-- Persistence layer dependencies -->
        <dependency>
            <groupId>org.hibernate.orm</groupId>
            <groupId>org.hibernate</groupId>
            <artifactId>hibernate-core</artifactId>
        </dependency>

        <dependency>
            <groupId>org.hibernate</groupId>
            <artifactId>hibernate-entitymanager</artifactId>
        </dependency>

        <dependency>
            <groupId>org.hibernate</groupId>
            <artifactId>hibernate-c3p0</artifactId>
        </dependency>

        <!-- Other external dependencies -->
        <dependency>
            <groupId>joda-time</groupId>

@ -38,8 +48,8 @@
        </dependency>

        <dependency>
            <groupId>org.apache.commons</groupId>
            <artifactId>commons-lang3</artifactId>
            <groupId>commons-lang</groupId>
            <artifactId>commons-lang</artifactId>
        </dependency>

        <dependency>

@ -53,8 +63,8 @@
        </dependency>

        <dependency>
            <groupId>jakarta.json</groupId>
            <artifactId>jakarta.json-api</artifactId>
            <groupId>org.glassfish</groupId>
            <artifactId>javax.json</artifactId>
        </dependency>

        <dependency>

@ -85,6 +95,7 @@
        <dependency>
            <groupId>at.favre.lib</groupId>
            <artifactId>bcrypt</artifactId>
            <version>0.9.0</version>
        </dependency>

        <dependency>

@ -112,6 +123,11 @@
            <artifactId>lucene-highlighter</artifactId>
        </dependency>

        <dependency>
            <groupId>com.sun.mail</groupId>
            <artifactId>javax.mail</artifactId>
        </dependency>

        <dependency>
            <groupId>com.squareup.okhttp3</groupId>
            <artifactId>okhttp</artifactId>

@ -119,12 +135,7 @@

        <dependency>
            <groupId>org.apache.directory.api</groupId>
            <artifactId>api-ldap-client-api</artifactId>
        </dependency>

        <dependency>
            <groupId>org.apache.directory.api</groupId>
            <artifactId>api-ldap-codec-standalone</artifactId>
            <artifactId>api-all</artifactId>
        </dependency>

        <!-- Only there to read old index and rebuild them -->

@ -185,6 +196,25 @@
            <artifactId>postgresql</artifactId>
        </dependency>

        <!-- JDK 11 JAXB dependencies -->
        <dependency>
            <groupId>javax.xml.bind</groupId>
            <artifactId>jaxb-api</artifactId>
            <version>2.3.0</version>
        </dependency>

        <dependency>
            <groupId>com.sun.xml.bind</groupId>
            <artifactId>jaxb-core</artifactId>
            <version>2.3.0</version>
        </dependency>

        <dependency>
            <groupId>com.sun.xml.bind</groupId>
            <artifactId>jaxb-impl</artifactId>
            <version>2.3.0</version>
        </dependency>

        <!-- Test dependencies -->
        <dependency>
            <groupId>junit</groupId>

@ -20,11 +20,6 @@ public enum ConfigType {
     */
    GUEST_LOGIN,

    /**
     * OCR enabled.
     */
    OCR_ENABLED,

    /**
     * Default language.
     */

@ -45,7 +40,6 @@ public enum ConfigType {
    INBOX_ENABLED,
    INBOX_HOSTNAME,
    INBOX_PORT,
    INBOX_STARTTLS,
    INBOX_USERNAME,
    INBOX_PASSWORD,
    INBOX_FOLDER,

@ -59,7 +53,6 @@ public enum ConfigType {
    LDAP_ENABLED,
    LDAP_HOST,
    LDAP_PORT,
    LDAP_USESSL,
    LDAP_ADMIN_DN,
    LDAP_ADMIN_PASSWORD,
    LDAP_BASE_DN,

@ -43,7 +43,7 @@ public class Constants {
    /**
     * Supported document languages.
     */
    public static final List<String> SUPPORTED_LANGUAGES = Lists.newArrayList("eng", "fra", "ita", "deu", "spa", "por", "pol", "rus", "ukr", "ara", "hin", "chi_sim", "chi_tra", "jpn", "tha", "kor", "nld", "tur", "heb", "hun", "fin", "swe", "lav", "dan", "nor", "vie", "ces", "sqi");
    public static final List<String> SUPPORTED_LANGUAGES = Lists.newArrayList("eng", "fra", "ita", "deu", "spa", "por", "pol", "rus", "ukr", "ara", "hin", "chi_sim", "chi_tra", "jpn", "tha", "kor", "nld", "tur", "heb", "hun", "fin", "swe", "lav", "dan", "nor");

    /**
     * Base URL environment variable.
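
On the master side, `SUPPORTED_LANGUAGES` gains `vie`, `ces` and `sqi`, and the master Dockerfile installs the matching `tesseract-ocr-vie`, `tesseract-ocr-ces` and `tesseract-ocr-sqi` packages. A small check can flag languages that have no OCR package installed. This Java sketch assumes the Debian/Ubuntu package naming visible in the Dockerfile (`chi_sim` becomes `tesseract-ocr-chi-sim`). `OcrPackageCheck` is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

/** Hypothetical consistency check between supported languages and installed OCR packages. */
public class OcrPackageCheck {

    /** Maps a Tesseract language code to the Debian/Ubuntu package name used in the Dockerfile. */
    static String packageFor(String lang) {
        return "tesseract-ocr-" + lang.replace('_', '-');
    }

    /** Returns, in order, the languages whose package is not in the installed set. */
    static List<String> missingPackages(List<String> languages, Set<String> installed) {
        List<String> missing = new ArrayList<>();
        for (String lang : languages) {
            if (!installed.contains(packageFor(lang))) {
                missing.add(lang);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        List<String> langs = List.of("eng", "chi_sim", "vie", "ces", "sqi");
        Set<String> installed = Set.of("tesseract-ocr-chi-sim", "tesseract-ocr-vie",
                "tesseract-ocr-ces", "tesseract-ocr-sqi");
        System.out.println(missingPackages(langs, installed)); // [eng]
    }
}
```

The check reports `eng` because neither Dockerfile lists `tesseract-ocr-eng` explicitly. On Ubuntu it normally arrives as a dependency of `tesseract-ocr`, but that is an assumption worth verifying for a given base image.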

@ -10,8 +10,8 @@ import com.sismics.docs.core.util.AuditLogUtil;
import com.sismics.docs.core.util.SecurityUtil;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.ArrayList;
import java.util.Date;
import java.util.List;

@ -12,7 +12,7 @@ import com.sismics.docs.core.util.jpa.QueryParam;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import javax.persistence.EntityManager;
import java.sql.Timestamp;
import java.util.*;

@ -4,8 +4,8 @@ import com.sismics.docs.core.model.jpa.AuthenticationToken;
import com.sismics.util.context.ThreadLocalContext;
import org.joda.time.DateTime;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.Date;
import java.util.List;
import java.util.UUID;

@ -6,9 +6,9 @@ import com.sismics.docs.core.model.jpa.Comment;
import com.sismics.docs.core.util.AuditLogUtil;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.ArrayList;
import java.util.Date;

@ -4,8 +4,8 @@ import com.sismics.docs.core.constant.ConfigType;
import com.sismics.docs.core.model.jpa.Config;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;

/**
 * Configuration parameter DAO.

@ -4,8 +4,8 @@ import com.sismics.docs.core.dao.dto.ContributorDto;
import com.sismics.docs.core.model.jpa.Contributor;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.ArrayList;
import java.util.List;
import java.util.UUID;
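
Each of the import hunks above is the same mechanical rename: master imports from `jakarta.persistence` where v1.9 imports from `javax.persistence`. The rough sketch below performs that rewrite as a text transformation over Java source. It is deliberately limited to `javax.persistence`, and other `javax` packages are left alone. `JakartaImportRewrite` is a hypothetical tool, not something in the repository. A real migration also needs dependency changes, such as the `org.hibernate.orm` group ID in the `pom.xml` hunk.

```java
import java.util.regex.Pattern;

/** Hypothetical helper: rewrites javax.persistence imports to jakarta.persistence. */
public class JakartaImportRewrite {

    // Matches "import javax.persistence." and "import static javax.persistence." at the start of a line.
    private static final Pattern JAVAX_PERSISTENCE =
            Pattern.compile("^(\\s*import\\s+(?:static\\s+)?)javax\\.persistence\\.", Pattern.MULTILINE);

    static String rewrite(String source) {
        // $1 keeps the "import " / "import static " prefix; only the package root changes.
        return JAVAX_PERSISTENCE.matcher(source).replaceAll("$1jakarta.persistence.");
    }

    public static void main(String[] args) {
        String before = "import javax.persistence.EntityManager;\nimport javax.mail.Session;\n";
        System.out.print(rewrite(before));
    }
}
```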

@ -7,10 +7,9 @@ import com.sismics.docs.core.model.jpa.Document;
import com.sismics.docs.core.util.AuditLogUtil;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import jakarta.persistence.TypedQuery;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.Date;
import java.util.List;

@ -51,9 +50,10 @@ public class DocumentDao {
     * @param limit Limit
     * @return List of documents
     */
    @SuppressWarnings("unchecked")
    public List<Document> findAll(int offset, int limit) {
        EntityManager em = ThreadLocalContext.get().getEntityManager();
        TypedQuery<Document> q = em.createQuery("select d from Document d where d.deleteDate is null", Document.class);
        Query q = em.createQuery("select d from Document d where d.deleteDate is null");
        q.setFirstResult(offset);
        q.setMaxResults(limit);
        return q.getResultList();

@ -65,9 +65,10 @@ public class DocumentDao {
     * @param userId User ID
     * @return List of documents
     */
    @SuppressWarnings("unchecked")
    public List<Document> findByUserId(String userId) {
        EntityManager em = ThreadLocalContext.get().getEntityManager();
        TypedQuery<Document> q = em.createQuery("select d from Document d where d.userId = :userId and d.deleteDate is null", Document.class);
        Query q = em.createQuery("select d from Document d where d.userId = :userId and d.deleteDate is null");
        q.setParameter("userId", userId);
        return q.getResultList();
    }

@ -87,7 +88,7 @@ public class DocumentDao {
        }

        EntityManager em = ThreadLocalContext.get().getEntityManager();
        StringBuilder sb = new StringBuilder("select distinct d.DOC_ID_C, d.DOC_TITLE_C, d.DOC_DESCRIPTION_C, d.DOC_SUBJECT_C, d.DOC_IDENTIFIER_C, d.DOC_PUBLISHER_C, d.DOC_FORMAT_C, d.DOC_SOURCE_C, d.DOC_TYPE_C, d.DOC_COVERAGE_C, d.DOC_RIGHTS_C, d.DOC_CREATEDATE_D, d.DOC_UPDATEDATE_D, d.DOC_LANGUAGE_C, d.DOC_IDFILE_C,");
        StringBuilder sb = new StringBuilder("select distinct d.DOC_ID_C, d.DOC_TITLE_C, d.DOC_DESCRIPTION_C, d.DOC_SUBJECT_C, d.DOC_IDENTIFIER_C, d.DOC_PUBLISHER_C, d.DOC_FORMAT_C, d.DOC_SOURCE_C, d.DOC_TYPE_C, d.DOC_COVERAGE_C, d.DOC_RIGHTS_C, d.DOC_CREATEDATE_D, d.DOC_UPDATEDATE_D, d.DOC_LANGUAGE_C, ");
        sb.append(" (select count(s.SHA_ID_C) from T_SHARE s, T_ACL ac where ac.ACL_SOURCEID_C = d.DOC_ID_C and ac.ACL_TARGETID_C = s.SHA_ID_C and ac.ACL_DELETEDATE_D is null and s.SHA_DELETEDATE_D is null) shareCount, ");
        sb.append(" (select count(f.FIL_ID_C) from T_FILE f where f.FIL_DELETEDATE_D is null and f.FIL_IDDOC_C = d.DOC_ID_C) fileCount, ");
        sb.append(" u.USE_USERNAME_C ");

@ -121,7 +122,6 @@ public class DocumentDao {
            documentDto.setCreateTimestamp(((Timestamp) o[i++]).getTime());
            documentDto.setUpdateTimestamp(((Timestamp) o[i++]).getTime());
            documentDto.setLanguage((String) o[i++]);
            documentDto.setFileId((String) o[i++]);
            documentDto.setShared(((Number) o[i++]).intValue() > 0);
            documentDto.setFileCount(((Number) o[i++]).intValue());
            documentDto.setCreator((String) o[i]);
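
The `@ -87,7` and `@ -121,7` hunks must change together. Master adds `d.DOC_IDFILE_C` to the native `select` and a matching `setFileId((String) o[i++])`, because every `Object[]` row is consumed positionally with a running index. The self-contained sketch below shows that pattern. It uses a hypothetical, trimmed-down `Row` class instead of Teedy's `DocumentDto` and reads only the last five columns of the query.

```java
/** Sketch of positional Object[] row mapping with a running index (illustrative only). */
public class PositionalRowMapping {

    /** Hypothetical stand-in for DocumentDto, reduced to the tail of the select list. */
    static final class Row {
        String language;
        String fileId;
        boolean shared;
        int fileCount;
        String creator;
    }

    /**
     * Reads columns in exactly the order of the select clause:
     * DOC_LANGUAGE_C, DOC_IDFILE_C, shareCount, fileCount, USE_USERNAME_C.
     * Adding a column to the query without a matching read here (or the reverse)
     * shifts every later value: either a ClassCastException or a value silently
     * stored in the wrong field.
     */
    static Row map(Object[] o) {
        int i = 0;
        Row row = new Row();
        row.language = (String) o[i++];
        row.fileId = (String) o[i++];
        row.shared = ((Number) o[i++]).intValue() > 0;  // share count > 0 means shared
        row.fileCount = ((Number) o[i++]).intValue();
        row.creator = (String) o[i];
        return row;
    }

    public static void main(String[] args) {
        Row row = map(new Object[] {"eng", "file-1", 2L, 3, "admin"});
        System.out.println(row.language + " " + row.fileId + " " + row.shared + " " + row.fileCount + " " + row.creator);
    }
}
```

The sample row prints `eng file-1 true 3 admin`. Counts are read through `Number` because JDBC drivers may return different numeric types for `count(...)`.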

|

||||

@ -138,16 +138,16 @@ public class DocumentDao {
EntityManager em = ThreadLocalContext.get().getEntityManager();

// Get the document
TypedQuery<Document> dq = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null", Document.class);
dq.setParameter("id", id);
Document documentDb = dq.getSingleResult();
Query q = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null");
q.setParameter("id", id);
Document documentDb = (Document) q.getSingleResult();

// Delete the document
Date dateNow = new Date();
documentDb.setDeleteDate(dateNow);

// Delete linked data
Query q = em.createQuery("update File f set f.deleteDate = :dateNow where f.documentId = :documentId and f.deleteDate is null");
q = em.createQuery("update File f set f.deleteDate = :dateNow where f.documentId = :documentId and f.deleteDate is null");
q.setParameter("documentId", id);
q.setParameter("dateNow", dateNow);
q.executeUpdate();

@ -179,10 +179,10 @@ public class DocumentDao {
*/
public Document getById(String id) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<Document> q = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null", Document.class);
Query q = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null");
q.setParameter("id", id);
try {
return q.getSingleResult();
return (Document) q.getSingleResult();
} catch (NoResultException e) {
return null;
}

@ -199,9 +199,9 @@ public class DocumentDao {
EntityManager em = ThreadLocalContext.get().getEntityManager();

// Get the document
TypedQuery<Document> q = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null", Document.class);
Query q = em.createQuery("select d from Document d where d.id = :id and d.deleteDate is null");
q.setParameter("id", document.getId());
Document documentDb = q.getSingleResult();
Document documentDb = (Document) q.getSingleResult();

// Update the document
documentDb.setTitle(document.getTitle());

@ -237,6 +237,7 @@ public class DocumentDao {
query.setParameter("fileId", document.getFileId());
query.setParameter("id", document.getId());
query.executeUpdate();

}

/**

@ -5,8 +5,8 @@ import com.sismics.docs.core.dao.dto.DocumentMetadataDto;
import com.sismics.docs.core.model.jpa.DocumentMetadata;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.ArrayList;
import java.util.List;
import java.util.UUID;

@ -4,16 +4,12 @@ import com.sismics.docs.core.constant.AuditLogType;
import com.sismics.docs.core.model.jpa.File;
import com.sismics.docs.core.util.AuditLogUtil;
import com.sismics.util.context.ThreadLocalContext;
import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import jakarta.persistence.TypedQuery;

import java.util.Collections;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.Date;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.UUID;

/**

@ -51,9 +47,10 @@ public class FileDao {
* @param limit Limit
* @return List of files
*/
@SuppressWarnings("unchecked")
public List<File> findAll(int offset, int limit) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.deleteDate is null");
q.setFirstResult(offset);
q.setMaxResults(limit);
return q.getResultList();

@ -65,38 +62,28 @@ public class FileDao {
* @param userId User ID
* @return List of files
*/
@SuppressWarnings("unchecked")
public List<File> findByUserId(String userId) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.userId = :userId and f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.userId = :userId and f.deleteDate is null");
q.setParameter("userId", userId);
return q.getResultList();
}

/**
* Returns a list of active files.
*
* @param ids Files IDs
* @return List of files
*/
public List<File> getFiles(List<String> ids) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.id in :ids and f.deleteDate is null", File.class);
q.setParameter("ids", ids);
return q.getResultList();
}

/**
* Returns an active file or null.
* Returns an active file.
*
* @param id File ID
* @return File
* @return Document
*/
public File getFile(String id) {
List<File> files = getFiles(List.of(id));
if (files.isEmpty()) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
Query q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null");
q.setParameter("id", id);
try {
return (File) q.getSingleResult();
} catch (NoResultException e) {
return null;
} else {
return files.get(0);
}
}
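In one version of `getFile(String id)` above, the single-file lookup delegates to the batch `getFiles(List<String> ids)` query. It returns the first element, or `null` when nothing matches. The sketch below reproduces that shape against a hypothetical in-memory map instead of JPA. Note one behavioural detail: `List.of` rejects `null` elements, so with this approach `getFile(null)` throws `NullPointerException` instead of returning `null`.

```java
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

final class SingleViaBatchSketch {
    // Hypothetical stand-in for the active rows of the File table: id -> file name.
    static final Map<String, String> ACTIVE_FILES = Map.of("f1", "a.pdf", "f2", "b.png");

    // Batch lookup: returns the active files whose IDs are in the list; unknown IDs are skipped.
    static List<String> getFiles(List<String> ids) {
        return ids.stream()
                .filter(ACTIVE_FILES::containsKey)
                .map(ACTIVE_FILES::get)
                .collect(Collectors.toList());
    }

    // Single lookup expressed through the batch one: first match, or null when there is none.
    static String getFile(String id) {
        List<String> files = getFiles(List.of(id)); // List.of(null) throws NullPointerException
        return files.isEmpty() ? null : files.get(0);
    }

    public static void main(String[] args) {
        System.out.println(getFile("f1"));
        System.out.println(getFile("missing"));
    }
}
```

Reusing the batch query keeps a single JPQL statement to maintain. The trade-off is the null-handling difference noted above.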

@ -105,15 +92,15 @@ public class FileDao {
*
* @param id File ID
* @param userId User ID
* @return File
* @return Document
*/
public File getFile(String id, String userId) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.id = :id and f.userId = :userId and f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.id = :id and f.userId = :userId and f.deleteDate is null");
q.setParameter("id", id);
q.setParameter("userId", userId);
try {
return q.getSingleResult();
return (File) q.getSingleResult();
} catch (NoResultException e) {
return null;
}

@ -129,9 +116,9 @@ public class FileDao {
EntityManager em = ThreadLocalContext.get().getEntityManager();

// Get the file
TypedQuery<File> q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null");
q.setParameter("id", id);
File fileDb = q.getSingleResult();
File fileDb = (File) q.getSingleResult();

// Delete the file
Date dateNow = new Date();

@ -151,9 +138,9 @@ public class FileDao {
EntityManager em = ThreadLocalContext.get().getEntityManager();

// Get the file
TypedQuery<File> q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null");
q.setParameter("id", file.getId());
File fileDb = q.getSingleResult();
File fileDb = (File) q.getSingleResult();

// Update the file
fileDb.setDocumentId(file.getDocumentId());

@ -163,7 +150,6 @@ public class FileDao {
fileDb.setMimeType(file.getMimeType());
fileDb.setVersionId(file.getVersionId());
fileDb.setLatestVersion(file.isLatestVersion());
fileDb.setSize(file.getSize());

return file;
}

@ -176,82 +162,46 @@ public class FileDao {
*/
public File getActiveById(String id) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null", File.class);
Query q = em.createQuery("select f from File f where f.id = :id and f.deleteDate is null");
q.setParameter("id", id);
try {
return q.getSingleResult();
return (File) q.getSingleResult();
} catch (NoResultException e) {
return null;
}
}

/**
* Get files by document ID or all orphan files of a user.
* Get files by document ID or all orphan files of an user.
*
* @param userId User ID
* @param documentId Document ID
* @return List of files
*/
@SuppressWarnings("unchecked")
public List<File> getByDocumentId(String userId, String documentId) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
if (documentId == null) {
TypedQuery<File> q = em.createQuery("select f from File f where f.documentId is null and f.deleteDate is null and f.latestVersion = true and f.userId = :userId order by f.createDate asc", File.class);
Query q = em.createQuery("select f from File f where f.documentId is null and f.deleteDate is null and f.latestVersion = true and f.userId = :userId order by f.createDate asc");
q.setParameter("userId", userId);
return q.getResultList();
} else {
return getByDocumentsIds(Collections.singleton(documentId));
}
}

/**
* Get files by documents IDs.
*
* @param documentIds Documents IDs
* @return List of files
*/
public List<File> getByDocumentsIds(Iterable<String> documentIds) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.documentId in :documentIds and f.latestVersion = true and f.deleteDate is null order by f.order asc", File.class);
q.setParameter("documentIds", documentIds);
Query q = em.createQuery("select f from File f where f.documentId = :documentId and f.latestVersion = true and f.deleteDate is null order by f.order asc");
q.setParameter("documentId", documentId);
return q.getResultList();
}

/**
* Get files count by documents IDs.
*
* @param documentIds Documents IDs
* @return the number of files per document id
*/
public Map<String, Long> countByDocumentsIds(Iterable<String> documentIds) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
Query q = em.createQuery("select f.documentId, count(*) from File f where f.documentId in :documentIds and f.latestVersion = true and f.deleteDate is null group by (f.documentId)");
q.setParameter("documentIds", documentIds);
Map<String, Long> result = new HashMap<>();
q.getResultList().forEach(o -> {
Object[] resultLine = (Object[]) o;
result.put((String) resultLine[0], (Long) resultLine[1]);
});
return result;
}

/**
* Get all files from a version.
*
* @param versionId Version ID
* @return List of files
*/
@SuppressWarnings("unchecked")
public List<File> getByVersionId(String versionId) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.versionId = :versionId and f.deleteDate is null order by f.order asc", File.class);
Query q = em.createQuery("select f from File f where f.versionId = :versionId and f.deleteDate is null order by f.order asc");
q.setParameter("versionId", versionId);
return q.getResultList();
}

public List<File> getFilesWithUnknownSize(int limit) {
EntityManager em = ThreadLocalContext.get().getEntityManager();
TypedQuery<File> q = em.createQuery("select f from File f where f.size = :size and f.deleteDate is null order by f.order asc", File.class);
q.setParameter("size", File.UNKNOWN_SIZE);
q.setMaxResults(limit);
return q.getResultList();
}
}
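`countByDocumentsIds` runs a `group by` query whose rows arrive as `Object[]` pairs of `{documentId, count}` and copies them into a `Map<String, Long>`. The `(Long)` cast relies on JPQL `COUNT` returning `Long`. A document with no matching file produces no row at all, so it is absent from the map rather than mapped to zero. Here is a small sketch of the conversion on plain arrays. It uses `getOrDefault` as one way a caller might read missing entries as zero; the class and method names are illustrative:

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class CountByDocumentSketch {
    // Converts group-by rows of the form {documentId, count} into a lookup map.
    static Map<String, Long> toCountMap(List<Object[]> rows) {
        Map<String, Long> result = new HashMap<>();
        for (Object[] row : rows) {
            result.put((String) row[0], (Long) row[1]);
        }
        return result;
    }

    public static void main(String[] args) {
        List<Object[]> rows = List.of(
                new Object[] {"doc-1", 3L},
                new Object[] {"doc-2", 1L});
        Map<String, Long> counts = toCountMap(rows);
        // "doc-3" had no files, so the query returned no row for it.
        System.out.println(counts.getOrDefault("doc-3", 0L));
    }
}
```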

@ -12,9 +12,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.*;

/**

@ -184,8 +184,10 @@ public class GroupDao {

criteriaList.add("g.GRP_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);
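Each DAO search adds its soft-delete predicate (`g.GRP_DELETEDATE_D is null` in `GroupDao`) to `criteriaList` before rendering the `where` clause. As a result, the list is never empty by the time the clause is built. Here is a minimal sketch of the rendering step. It uses `String.join` in place of Guava's `Joiner.on(" and ").join(...)`; the two behave the same for non-null elements, although `Joiner` throws on a `null` element where `String.join` writes `"null"`. The base query and the extra criterion in `main` are hypothetical:

```java
import java.util.ArrayList;
import java.util.List;

final class WhereClauseSketch {
    // Appends " where c1 and c2 ..." to the base query when at least one criterion is present.
    static String withWhere(String baseQuery, List<String> criteriaList) {
        StringBuilder sb = new StringBuilder(baseQuery);
        if (!criteriaList.isEmpty()) {
            sb.append(" where ");
            sb.append(String.join(" and ", criteriaList));
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        List<String> criteriaList = new ArrayList<>();
        criteriaList.add("g.GRP_NAME_C like :name"); // hypothetical optional filter
        criteriaList.add("g.GRP_DELETEDATE_D is null"); // soft-delete filter, always added
        System.out.println(withWhere("select g.GRP_ID_C from T_GROUP g", criteriaList)); // hypothetical base query
    }
}
```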

@ -12,9 +12,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.*;

/**

@ -123,8 +123,10 @@ public class MetadataDao {

criteriaList.add("m.MET_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -6,9 +6,9 @@ import com.sismics.util.context.ThreadLocalContext;
import org.joda.time.DateTime;
import org.joda.time.DurationFieldType;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.Date;
import java.util.UUID;

@ -4,8 +4,8 @@ import com.sismics.docs.core.dao.dto.RelationDto;
import com.sismics.docs.core.model.jpa.Relation;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.*;

/**

@ -3,8 +3,8 @@ package com.sismics.docs.core.dao;
import com.google.common.collect.Sets;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.Set;

/**

@ -11,7 +11,7 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import javax.persistence.EntityManager;
import java.sql.Timestamp;
import java.util.*;

@ -64,8 +64,10 @@ public class RouteDao {
}
criteriaList.add("r.RTE_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -12,9 +12,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.*;

@ -145,8 +145,10 @@ public class RouteModelDao {

criteriaList.add("rm.RTM_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -12,8 +12,8 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.*;

@ -90,8 +90,10 @@ public class RouteStepDao {
}
criteriaList.add("rs.RTP_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -3,8 +3,8 @@ package com.sismics.docs.core.dao;
import com.sismics.docs.core.model.jpa.Share;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.Query;
import java.util.Date;
import java.util.UUID;

@ -19,6 +19,7 @@ public class ShareDao {
*
* @param share Share
* @return New ID
* @throws Exception
*/
public String create(Share share) {
// Create the UUID

@ -13,9 +13,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.*;

/**

@ -199,8 +199,10 @@ public class TagDao {

criteriaList.add("t.TAG_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -1,7 +1,6 @@
package com.sismics.docs.core.dao;

import com.google.common.base.Joiner;
import com.google.common.base.Strings;
import at.favre.lib.crypto.bcrypt.BCrypt;
import org.joda.time.DateTime;
import org.slf4j.Logger;

@ -19,9 +18,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.*;

@ -290,7 +289,7 @@ public class UserDao {
private String hashPassword(String password) {
int bcryptWork = Constants.DEFAULT_BCRYPT_WORK;
String envBcryptWork = System.getenv(Constants.BCRYPT_WORK_ENV);
if (!Strings.isNullOrEmpty(envBcryptWork)) {
if (envBcryptWork != null) {
try {
int envBcryptWorkInt = Integer.parseInt(envBcryptWork);
if (envBcryptWorkInt >= 4 && envBcryptWorkInt <= 31) {
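`hashPassword` starts from a default bcrypt cost. It overrides that cost from an environment variable only when the value parses to an integer between 4 and 31, which is the range of log2 round counts bcrypt accepts. The two versions differ in their guard. `Strings.isNullOrEmpty` also skips an empty string, while `envBcryptWork != null` passes `""` on to `Integer.parseInt`, which throws `NumberFormatException`. Below is a standalone sketch of the selection logic that follows the `isNullOrEmpty` variant. Two details are assumptions, because neither `Constants.DEFAULT_BCRYPT_WORK`'s value nor the `catch` block is visible in this hunk: the default of 10, and the fallback on unparsable input.

```java
final class BcryptWorkSketch {
    // Hypothetical default; the project's Constants.DEFAULT_BCRYPT_WORK value is not shown in this diff.
    static final int DEFAULT_BCRYPT_WORK = 10;

    // Returns the bcrypt cost to use, given the raw environment variable value (may be null).
    static int resolveBcryptWork(String envBcryptWork) {
        int bcryptWork = DEFAULT_BCRYPT_WORK;
        if (envBcryptWork != null && !envBcryptWork.isEmpty()) {
            try {
                int envBcryptWorkInt = Integer.parseInt(envBcryptWork);
                // bcrypt only accepts costs (log2 of the round count) from 4 to 31.
                if (envBcryptWorkInt >= 4 && envBcryptWorkInt <= 31) {
                    bcryptWork = envBcryptWorkInt;
                }
            } catch (NumberFormatException e) {
                // Assumed behaviour: ignore malformed values and keep the default.
            }
        }
        return bcryptWork;
    }

    public static void main(String[] args) {
        // In the DAO the value comes from System.getenv; here it is passed in directly.
        System.out.println(resolveBcryptWork("12"));
    }
}
```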

@ -3,9 +3,9 @@ package com.sismics.docs.core.dao;
import com.sismics.docs.core.model.jpa.Vocabulary;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.util.List;
import java.util.UUID;

@ -20,6 +20,7 @@ public class VocabularyDao {
*
* @param vocabulary Vocabulary
* @return New ID
* @throws Exception
*/
public String create(Vocabulary vocabulary) {
// Create the UUID

@ -9,9 +9,9 @@ import com.sismics.docs.core.util.jpa.QueryUtil;
import com.sismics.docs.core.util.jpa.SortCriteria;
import com.sismics.util.context.ThreadLocalContext;

import jakarta.persistence.EntityManager;
import jakarta.persistence.NoResultException;
import jakarta.persistence.Query;
import javax.persistence.EntityManager;
import javax.persistence.NoResultException;
import javax.persistence.Query;
import java.sql.Timestamp;
import java.util.*;

@ -42,8 +42,10 @@ public class WebhookDao {
}
criteriaList.add("w.WHK_DELETEDATE_D is null");

if (!criteriaList.isEmpty()) {
sb.append(" where ");
sb.append(Joiner.on(" and ").join(criteriaList));
}

// Perform the search
QueryParam queryParam = QueryUtil.getSortedQueryParam(new QueryParam(sb.toString(), parameterMap), sortCriteria);

@ -1,6 +1,5 @@
package com.sismics.docs.core.dao.criteria;

import java.util.ArrayList;
import java.util.Date;
import java.util.List;

@ -19,7 +18,7 @@ public class DocumentCriteria {
/**
* Search query.
*/
private String simpleSearch;
private String search;

/**
* Full content search query.

@ -50,13 +49,13 @@ public class DocumentCriteria {
* Tag IDs.
* The first level list will be AND'ed and the second level list will be OR'ed.
*/
private List<List<String>> tagIdList = new ArrayList<>();
private List<List<String>> tagIdList;

/**
* Tag IDs to exclude.
* Tag IDs to excluded.
* The first and second level list will be excluded.
*/
private List<List<String>> excludedTagIdList = new ArrayList<>();
private List<List<String>> excludedTagIdList;

/**
* Shared status.
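The Javadoc on `tagIdList` describes a two-level filter. Tags inside an inner list are OR'ed, the inner lists are AND'ed together, and any tag anywhere in `excludedTagIdList` rules a document out. The in-memory sketch below illustrates only those documented semantics, for a document represented by its set of tag IDs. It is not how the project evaluates the criteria. It treats a `null` list (the field declared without the `new ArrayList<>()` initializer) as no constraint.

```java
import java.util.List;
import java.util.Set;

final class TagFilterSketch {
    // Returns true when the document's tags satisfy both the include and the exclude criteria.
    static boolean matches(Set<String> documentTags,
                           List<List<String>> tagIdList,
                           List<List<String>> excludedTagIdList) {
        if (tagIdList != null) {
            for (List<String> anyOf : tagIdList) {
                // Second level is OR'ed: at least one tag of the group must be present.
                if (anyOf.stream().noneMatch(documentTags::contains)) {
                    // First level is AND'ed: a single unmatched group rejects the document.
                    return false;
                }
            }
        }
        if (excludedTagIdList != null) {
            for (List<String> group : excludedTagIdList) {
                // Both levels are excluded: any listed tag rejects the document.
                if (group.stream().anyMatch(documentTags::contains)) {
                    return false;
                }
            }
        }
        return true;
    }

    public static void main(String[] args) {
        Set<String> tags = Set.of("invoice", "2023");
        // (invoice OR receipt) AND 2023, excluding draft
        System.out.println(matches(tags,
                List.of(List.of("invoice", "receipt"), List.of("2023")),
                List.of(List.of("draft"))));
    }
}
```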

@ -83,11 +82,6 @@ public class DocumentCriteria {
*/
private String mimeType;

/**
* Titles to include.
*/
private List<String> titleList = new ArrayList<>();

public List<String> getTargetIdList() {
return targetIdList;
}

@ -96,12 +90,12 @@ public class DocumentCriteria {
this.targetIdList = targetIdList;
}

public String getSimpleSearch() {